تأمين الطبقة العصبية: أمن الذكاء الاصطناعي للمؤسسات

Executive Summary

Enterprise AI is no longer a competitive differentiator — it is load-bearing infrastructure. And yet, the organizations deploying it at scale are doing so without the security architecture that load-bearing infrastructure demands. This guide defines the neural layer and provides a threat taxonomy for defending against prompt injection, model poisoning, and data exfiltration.

The New Attack Surface No One Is Auditing

There is a particular kind of institutional blindness that emerges when a technology moves too fast for governance structures to follow. Security teams are still refining their cloud posture while the next layer of exposure has already been deployed by product teams integrating LLMs into customer-facing workflows. That layer is the neural layer. And it is, by almost every enterprise security benchmark, critically under-secured.

Defining the Neural Layer in Enterprise Architecture

The neural layer refers to the ensemble of machine learning components, inference endpoints, model weights, training pipelines, embedding systems, and orchestration logic that collectively enable AI-driven functionality. This sits above the traditional application layer and includes foundation models, vector databases, and protocol runtimes. Each of these subsystems has its own threat model and attack surface.

The Threat Taxonomy: What You Are Actually Defending Against

A rigorous security posture begins with a rigorous threat model. The enterprise AI threat landscape encompasses distinct attack categories that require distinct defensive controls.

| Threat Class | Target | Attack Vector | Business Impact |

|---|---|---|---|

| Prompt Injection | Model inference | Malicious user input in fields | Data leakage, policy bypass |

| Data Poisoning | Model weights | Corrupted training datasets | Systematic model misbehavior |

| Exfiltration | Training data | Statistical reconstruction | PII and IP exfiltration |

| Supply Chain | Third-party models | Malicious pre-trained weights | Backdoor deployment |

| Jailbreaking | Safety guardrails | Social engineering | Harmful output, reputational risk |

Prompt Injection and Adversarial Inputs

Prompt injection is the neural layer equivalent of SQL injection. It can be direct (manipulating user-facing input) or indirect (embedding malicious instructions in retrieved data). Effective mitigation requires structural prompt isolation, input sanitization pipelines, least-privilege prompting, and output validation layers.

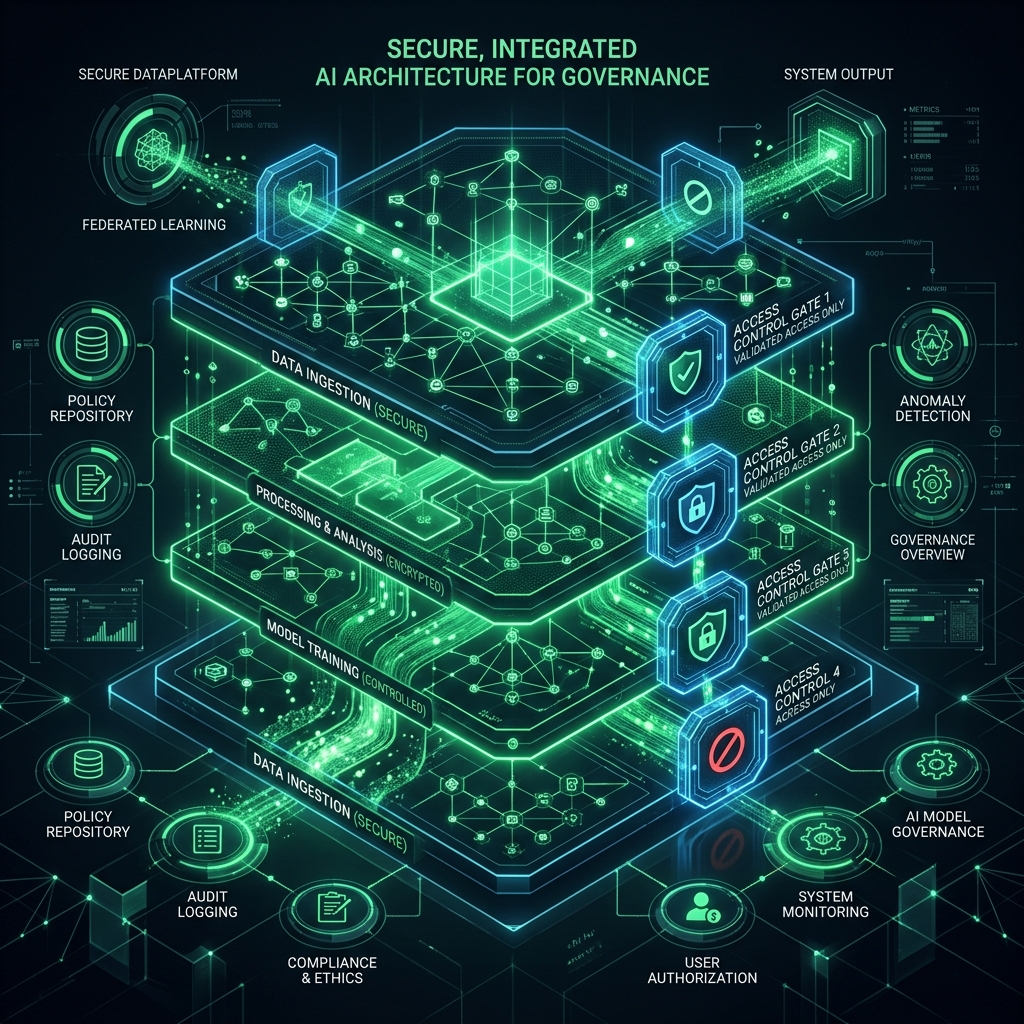

Governance Frameworks for Enterprise AI Safety

Sustainable AI safety requires formalized governance structures. Technical controls without governance are tactical countermeasures, not strategic postures. This includes inventory and classification, ownership accountability, and lifecycle governance.

Conclusion: The Architectural Imperative

The question is not whether your AI systems will be tested by adversarial conditions—they will be. The question is whether the architecture you built will hold. Neural layer security is not a coating; it is a structural property of systems that are built to last. EVDOPES engineers digital infrastructure for organizations that cannot afford the cost of architectural failure. Precision is the constraint.