Shadow AI: The Hidden Risks in Your Organization

Executive Summary

Estimated Read Time: 14 minutes

Shadow AI is not an emerging risk. It is an already active, unaccounted-for system layer operating beneath your organization’s official architecture. Left unmanaged, it silently fragments data integrity, erodes governance, and introduces decision-making pathways your leadership cannot see, audit, or control.

The System You Didn’t Design Is Already Running

Shadow AI refers to the unauthorized, ungoverned use of artificial intelligence tools within an organization—typically adopted by employees seeking speed, efficiency, or competitive advantage outside sanctioned systems.

Unlike traditional shadow IT, Shadow AI is not limited to infrastructure or software usage. It directly influences cognition, decision-making, and output generation. It does not just process data. It reshapes it.

This distinction is critical.

When an employee uses an external AI system to draft proposals, analyze financial data, generate code, or summarize internal reports, they are effectively outsourcing a portion of the organization’s intellectual process to an external, opaque model. That process is rarely logged, audited, or secured. The result is a parallel intelligence layer—fast, productive, and completely unregulated.

Why Shadow AI Emerges in High-Performance Environments

Speed gaps create unauthorized systems

Shadow AI is not born from negligence. It emerges from latency—organizational latency. When internal systems are slow, restricted, or overly bureaucratic, high-performing individuals will route around them. AI tools offer immediate leverage: faster writing, faster coding, faster analysis, faster ideation.

In environments where execution speed is tied to performance, employees will prioritize output over compliance. This is the first architectural failure: when official systems cannot compete with external tools, governance loses by design.

Accessibility outpaces policy

AI platforms are frictionless to access. No procurement cycles, no integration requirements, no onboarding overhead. A browser is sufficient. Policy, on the other hand, is slow. Legal review, security validation, compliance frameworks, and internal approvals operate on timelines incompatible with the pace of AI adoption.

This asymmetry creates a predictable outcome: usage precedes authorization. By the time leadership formalizes an AI policy, the organization is already deeply dependent on tools it has not evaluated.

The Core Risk Categories of Shadow AI

Data leakage is the most visible risk—and the least understood

When employees input sensitive information into external AI systems, they may unknowingly expose:

- Proprietary business data
- Client information
- Financial models
- Internal strategies
- Source code and system architecture
- Legal documents and confidential communications

The assumption that these systems are “private” is often incorrect or, at best, conditional. Even when providers claim data isolation, the organization has limited visibility into how data is stored, processed, or retained. The deeper issue is not just leakage. It is loss of control. Once data leaves the organization’s controlled environment, it is no longer governed by internal security protocols. It becomes subject to external policies, jurisdictions, and technical architectures.

Model hallucination creates silent decision risk

AI systems generate outputs that appear coherent, authoritative, and complete, even when incorrect. In a Shadow AI context, this creates a dangerous dynamic:

- Employees trust outputs because they accelerate workflows
- Outputs are rarely verified under time pressure
- Decisions are made based on synthetic or partially incorrect information

Unlike traditional errors, hallucinations are not always obvious. They are structurally plausible. This introduces a new class of risk: decisions that are wrong, but indistinguishable from correct ones at first glance.

Fragmentation of knowledge systems

Organizations rely on structured knowledge flows: documented processes, shared repositories, standardized tools. Shadow AI disrupts this by creating isolated pockets of generated knowledge:

- Insights generated in private chats are not stored centrally
- Outputs are not version-controlled
- Learnings are not shared across teams
- Decision logic becomes non-reproducible

Over time, this fragments institutional knowledge. The organization loses the ability to trace how conclusions were reached, which data was used, and what assumptions were embedded. This is not just inefficiency. It is epistemic drift—the gradual loss of a shared understanding of truth within the organization.

Compliance and regulatory exposure

In regulated industries, Shadow AI introduces immediate compliance risks:

- Unauthorized data processing may violate data protection laws
- Lack of audit trails undermines accountability
- AI-generated outputs may fail regulatory standards
- Cross-border data transfer may occur without oversight

The issue is not hypothetical. Regulatory frameworks are evolving rapidly, and organizations that cannot demonstrate control over AI usage will face increasing scrutiny. Shadow AI creates exposure not because AI is inherently non-compliant, but because its usage is invisible.

Security attack surface expansion

Every external AI tool integrated into workflows becomes a potential attack vector. Risks include:

- Prompt injection attacks that manipulate outputs
- Malicious data extraction through crafted inputs
- Compromised third-party platforms
- Unauthorized API usage
- Credential leakage through integrated tools

Traditional security models are not designed for AI-mediated interactions. The interface itself becomes a new boundary—one that is often unmonitored.

| Risk Category | Technical Failure | Organizational Impact |
|---|---|---|
| Data Integrity | Leakage to external models | Loss of IP and competitive advantage |
| Governance | Non-deterministic logic | Non-reproducible decision paths |
| Knowledge | Private chat silos | Erosion of institutional truth |
| Compliance | Unmonitored data flows | Regulatory exposure |

The Psychological Drivers Behind Shadow AI

Efficiency addiction

AI tools provide immediate reward: tasks that once took hours are completed in minutes. This creates a feedback loop where employees become dependent on the speed advantage. Once this dependency forms, removing or restricting access feels like a productivity loss—even if the underlying risks are significant.

Perceived harmlessness

Unlike downloading unauthorized software or accessing restricted systems, using AI tools feels benign. It resembles searching the web or using a calculator. This perception lowers caution. Employees do not view their actions as risky because the interface is familiar and the output is useful. The danger lies in this normalization. Shadow AI is not perceived as shadow activity.

Cognitive outsourcing

AI does not just accelerate execution. It reduces cognitive load. Employees begin to rely on AI for structuring thoughts, generating ideas, and validating assumptions. Over time, this shifts the locus of thinking from internal reasoning to external generation. In isolation, this may seem efficient. At scale, it alters how the organization thinks.

Architectural Consequences

Loss of system integrity

Enterprise architecture is built on controlled systems, defined data flows, and predictable interactions. Shadow AI introduces uncontrolled variables:

- Unknown data inputs
- Unverified processing logic
- Non-deterministic outputs
- External dependencies

This breaks the assumptions underlying system design. The architecture no longer reflects reality.

Inability to audit decision pathways

In high-stakes environments, decisions must be traceable. Leadership must understand how conclusions were reached. Shadow AI disrupts this by inserting opaque steps into the decision chain. If an analysis was partially generated by an external model, and that interaction is not logged, the organization loses visibility. This is not just a technical issue. It is a governance failure.
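One way to keep AI-assisted steps traceable is an append-only record in which each entry is linked to the previous one by a hash. The sketch below is illustrative only: the field names (`step`, `actor`, `ai_tool`) and the functions `append_entry` and `verify` are assumptions made for this example, not part of any specific product or standard.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry in the chain


def _digest(prev_hash, entry):
    """Hash the previous link together with the entry content."""
    payload = json.dumps({"prev": prev_hash, "entry": entry}, sort_keys=True)
    return hashlib.sha256(payload.encode()).hexdigest()


def append_entry(chain, entry):
    """Append a decision step, linking it to the previous step by hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({"prev": prev_hash, "entry": entry, "hash": _digest(prev_hash, entry)})
    return chain


def verify(chain):
    """Return True only if no entry was altered, removed, or reordered."""
    prev_hash = GENESIS
    for link in chain:
        if link["prev"] != prev_hash or link["hash"] != _digest(prev_hash, link["entry"]):
            return False
        prev_hash = link["hash"]
    return True


# Hypothetical decision trail: one AI-assisted step, one human review step.
trail = []
append_entry(trail, {"step": "draft analysis", "actor": "analyst", "ai_tool": "internal-assistant"})
append_entry(trail, {"step": "review", "actor": "manager", "ai_tool": None})
print(verify(trail))
```

A record like this does not make the model's reasoning transparent. It only ensures that the fact of AI involvement, and who reviewed it, cannot be quietly rewritten afterward.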

Misalignment between official and actual workflows

Documented processes become obsolete when employees adopt faster, unofficial alternatives. This creates a divergence:

- Official workflows reflect policy
- Actual workflows reflect behavior

When these diverge too far, policy loses relevance. The organization operates on an undocumented system layer that leadership does not control.

Strategic Response: Control Without Friction

Eliminate the speed gap

The most effective way to reduce Shadow AI is not prohibition. It is replacement. Organizations must provide internal AI systems that match or exceed the speed and usability of external tools. If internal solutions are slower, more complex, or less capable, employees will continue to bypass them. Performance is governance.

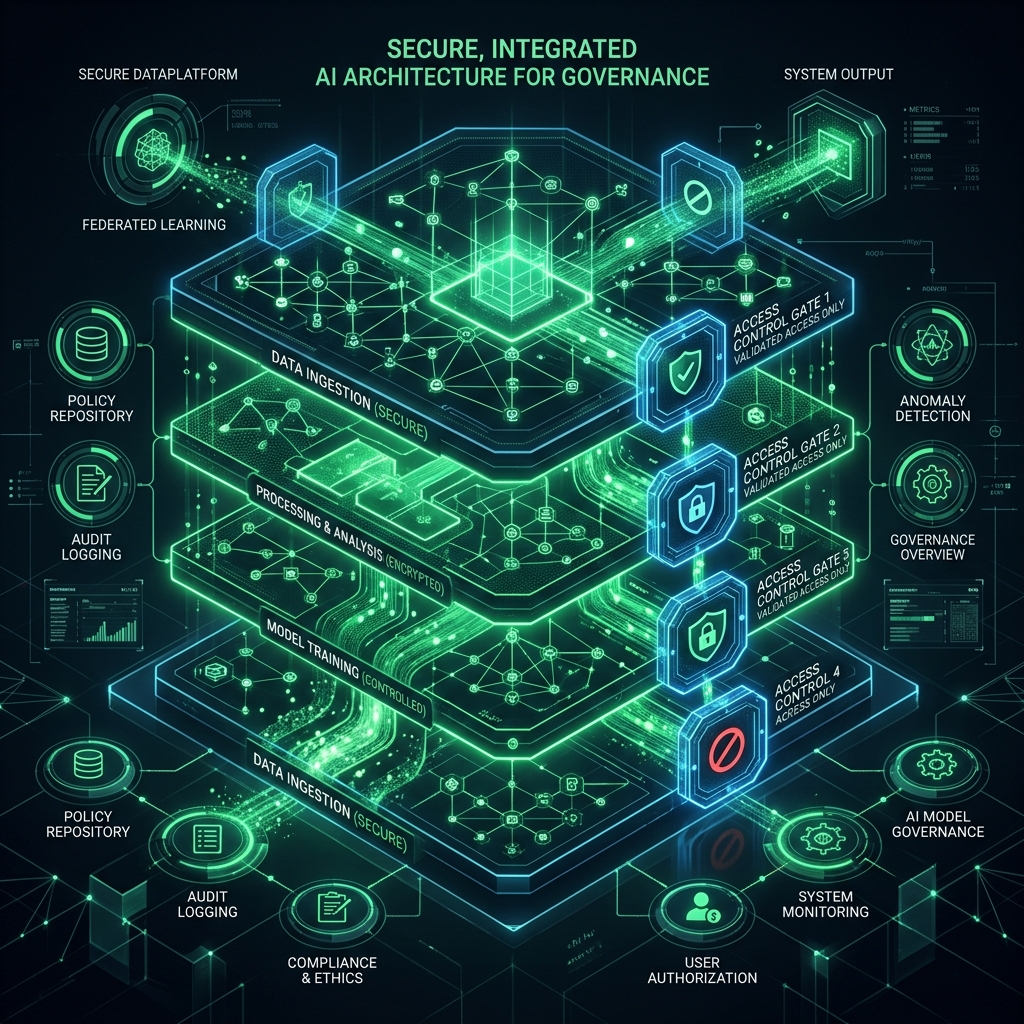

Build sanctioned AI infrastructure

- Secure, internal or private-model deployments
- Controlled API gateways
- Data governance layers that restrict sensitive inputs
- Logging and audit trails for all interactions
- Role-based access controls
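Several of these components can sit in a single gateway that every prompt passes through. The following is a minimal sketch under stated assumptions: the role names, the two regular expressions, and the `Gateway` class are hypothetical, and real data-loss-prevention rules would be far broader. The call to an actual model is deliberately left out.

```python
import hashlib
import re
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional, Tuple

# Hypothetical role-to-task mapping; a real deployment would read this from an identity provider.
ROLE_PERMISSIONS = {
    "analyst": {"summarize", "draft"},
    "engineer": {"summarize", "draft", "code"},
}

# Illustrative patterns only, not a complete definition of sensitive data.
SENSITIVE_PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "card_number": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


@dataclass
class Gateway:
    audit_log: List[dict] = field(default_factory=list)

    def redact(self, prompt: str) -> Tuple[str, List[str]]:
        """Replace sensitive matches with placeholders and report which rules fired."""
        fired = []
        for name, pattern in SENSITIVE_PATTERNS.items():
            prompt, count = pattern.subn(f"[REDACTED:{name}]", prompt)
            if count:
                fired.append(name)
        return prompt, fired

    def submit(self, user: str, role: str, task: str, prompt: str) -> Optional[str]:
        """Check the role, redact the prompt, log the attempt, and return the text to forward."""
        allowed = task in ROLE_PERMISSIONS.get(role, set())
        clean, fired = self.redact(prompt)
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "task": task,
            "allowed": allowed,
            "redactions": fired,
            # Store a hash rather than the raw prompt so the log does not become a second leak.
            "prompt_sha256": hashlib.sha256(prompt.encode()).hexdigest(),
        })
        return clean if allowed else None
```

Denied requests are logged as well as permitted ones, since blocked attempts are often the clearest signal of where sanctioned tooling falls short of what employees need.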

Define clear usage boundaries

Policies must be precise, not abstract. Employees need to understand:

- What data can and cannot be shared
- Which tools are approved
- What verification steps are required
- How outputs should be validated

Ambiguity creates loopholes. Precision creates compliance.
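A precise policy can often be written as data rather than prose, which makes it checkable by both people and systems. The sketch below assumes a four-level classification scheme and two made-up tool names (`internal-assistant`, `vendor-chat`); none of these come from a real standard, and the review rule is one possible choice among many.

```python
from dataclasses import dataclass

# Hypothetical classification levels, ordered from least to most sensitive.
LEVELS = ["public", "internal", "confidential", "restricted"]

# Hypothetical approved-tool registry: the most sensitive level each tool may receive.
APPROVED_TOOLS = {
    "internal-assistant": "confidential",
    "vendor-chat": "public",
}


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str
    requires_human_review: bool


def check_usage(tool: str, data_level: str, output_used_in_decision: bool) -> Decision:
    """Apply the policy: approved tool, data within its ceiling, review if output drives a decision."""
    if data_level not in LEVELS:
        raise ValueError(f"unknown data level: {data_level}")
    ceiling = APPROVED_TOOLS.get(tool)
    if ceiling is None:
        return Decision(False, f"{tool} is not an approved tool", False)
    if LEVELS.index(data_level) > LEVELS.index(ceiling):
        return Decision(False, f"{data_level} data exceeds the {ceiling} ceiling for {tool}", False)
    return Decision(True, "within policy", output_used_in_decision)


print(check_usage("vendor-chat", "internal", False).reason)
```

Each answer carries a reason string, so an employee who is refused learns which boundary was crossed instead of meeting an unexplained block.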

Monitor behavior, not just systems

Shadow AI cannot be managed solely through infrastructure. It requires behavioral visibility. This includes:

- Monitoring tool usage patterns
- Identifying unsanctioned platforms
- Understanding workflow deviations
- Mapping where AI is influencing decisions

The objective is not surveillance. It is situational awareness.
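Identifying unsanctioned platforms can start with data most organizations already collect, such as web proxy logs. This is a rough sketch, assuming a simplified log format of `timestamp department url` and placeholder domain lists; real proxy logs and real AI service domains will differ.

```python
from collections import Counter
from urllib.parse import urlparse

# Placeholder domains for illustration; a real list would be maintained by the security team.
KNOWN_AI_DOMAINS = {
    "chat.example-ai.com",
    "api.example-llm.io",
    "assistant.internal.example.org",
}
SANCTIONED_DOMAINS = {"assistant.internal.example.org"}


def unsanctioned_usage(log_lines):
    """Count requests per (department, host) to AI domains that are not sanctioned."""
    counts = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue  # skip lines that do not match the assumed format
        _, department, url = parts
        host = urlparse(url).hostname
        if host in KNOWN_AI_DOMAINS and host not in SANCTIONED_DOMAINS:
            counts[(department, host)] += 1
    return counts


logs = [
    "2024-05-01T09:00:00Z finance https://chat.example-ai.com/c/1",
    "2024-05-01T09:05:00Z finance https://chat.example-ai.com/c/2",
    "2024-05-01T09:06:00Z engineering https://assistant.internal.example.org/chat",
    "2024-05-01T09:07:00Z engineering https://api.example-llm.io/v1/complete",
]
for (department, host), n in unsanctioned_usage(logs).most_common():
    print(department, host, n)
```

Counting by department rather than by individual keeps the result at the level of workflow patterns, which fits the goal of situational awareness rather than surveillance.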

Train for judgment, not just tools

AI literacy is not about teaching employees how to use tools. It is about teaching them when not to trust them. Organizations must develop:

- Critical evaluation skills for AI outputs
- Awareness of hallucination risks
- Understanding of data sensitivity
- Discipline in verification

Without this, even sanctioned AI systems can introduce risk.

The Future State: Integrated Intelligence, Not Shadow Systems

AI must become part of the architecture, not an external layer

The long-term solution is not containment. It is integration. AI should operate within the organization’s controlled environment, aligned with its data models, security protocols, and decision frameworks. It should enhance existing systems, not bypass them. When AI is properly integrated, it becomes an amplifier of organizational intelligence—not a parallel system.

Visibility is the new control layer

In a world where AI interactions shape decisions, visibility becomes critical. Organizations must be able to answer:

- Where is AI being used?
- What data is processed?
- How are outputs influencing decisions?
- What risks are being introduced?

Without visibility, control is impossible.
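If AI interactions are logged, these four questions can be answered by simple aggregation. The sketch below assumes each interaction is recorded as a dictionary with the hypothetical keys `team`, `tool`, `data_level`, `used_in_decision`, and `flags`; the record shape is an assumption for illustration, not a prescribed schema.

```python
from collections import Counter


def visibility_report(records):
    """Aggregate interaction records into one summary per visibility question."""
    report = {
        "where": Counter(),      # where is AI being used: (team, tool) pairs
        "data": Counter(),       # what data is processed: classification levels
        "decisions": 0,          # how many outputs fed into a decision
        "risks": Counter(),      # what risks were flagged on interactions
    }
    for record in records:
        report["where"][(record["team"], record["tool"])] += 1
        report["data"][record["data_level"]] += 1
        if record["used_in_decision"]:
            report["decisions"] += 1
        report["risks"].update(record.get("flags", []))
    return report


# Hypothetical records, for illustration only.
records = [
    {"team": "finance", "tool": "internal-assistant", "data_level": "confidential",
     "used_in_decision": True, "flags": ["unverified_output"]},
    {"team": "finance", "tool": "internal-assistant", "data_level": "internal",
     "used_in_decision": False, "flags": []},
    {"team": "legal", "tool": "vendor-chat", "data_level": "public",
     "used_in_decision": True, "flags": ["unverified_output", "external_tool"]},
]
summary = visibility_report(records)
print(summary["where"].most_common(1))
```

The summary is only as complete as the underlying log, which is why interactions that bypass sanctioned systems remain invisible to it.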

Conclusion: What You Cannot See Will Reshape Your Organization

Shadow AI is not a future threat. It is an active, evolving layer within modern organizations—one that operates faster than governance, quieter than policy, and deeper than most leaders realize. The risk is not just data leakage or compliance failure. It is structural: the gradual loss of control over how information is created, processed, and trusted.

Organizations that ignore Shadow AI will not simply fall behind. They will lose coherence. Their systems will no longer reflect their operations, and their decisions will no longer be fully understood. Precision demands visibility. Performance demands control. In high-performance environments, intelligence must be engineered—not improvised.